Mastering mindsets: A guide to cognitive biases — Video-based lesson

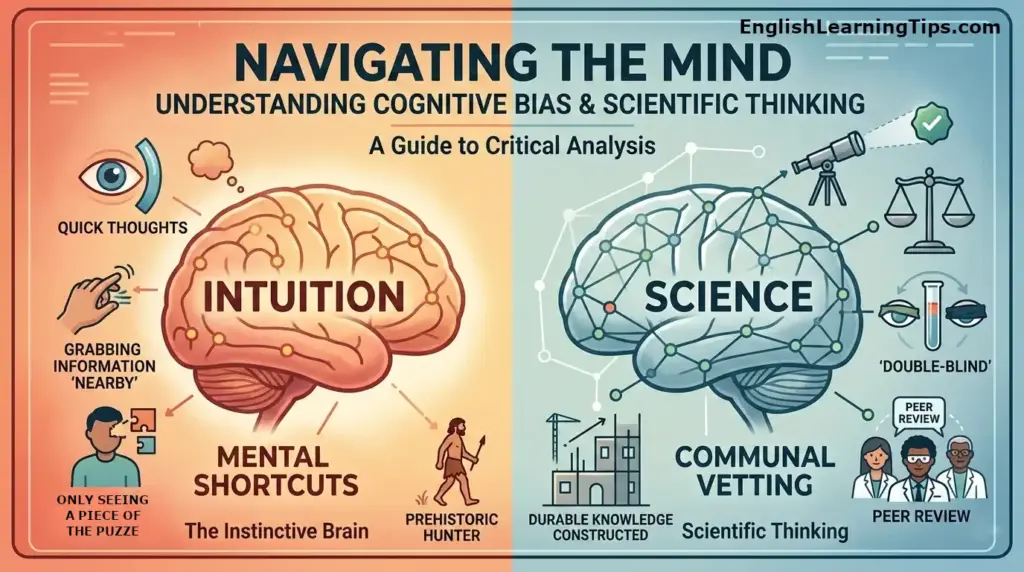

This lesson explores how our brains use mental shortcuts and how scientific thinking helps us overcome inherent biases. By understanding terms like cognitive bias, confirmation bias, and availability bias, students will learn to interrogate their own intuition.

Lesson plan: Navigating the mind: A guide to cognitive bias and scientific thinking

Level: Intermediate to Advanced (B2-C1)

Time: 60 minutes

Topic: Psychology, scientific method, and critical thinking

Objectives: To identify common mental shortcuts, understand the role of peer review, and practice discussing complex scientific concepts.

Background

Human evolution has gifted us with incredible pattern recognition skills. Thousands of years ago, these skills were essential for survival, helping our ancestors identify predators or distinguish between poisonous and edible plants. These mental shortcuts, or heuristics, allow our brains to solve problems quickly without expending excessive energy.

However, in the modern world, these same shortcuts can lead to cognitive bias. When we rely solely on intuition to answer complex questions—like the safety of air travel or the effectiveness of “learning styles”—our brains often fall prey to predictable weaknesses. Science is not just a body of facts, but a communal system designed specifically to test what is right rather than what “feels” right.

Want to dive deeper? To see these concepts in action, I highly recommend Daniel Kahneman’s Thinking, Fast and Slow—it is the definitive guide to how our ‘two systems’ of thought work.

Basic vocabulary

Introduce essential words related to scientific thinking and psychology to help students articulate how the mind processes information and where it might go wrong.

Because this lesson covers a lot of related vocabulary, give students the video and most of the vocabulary in advance, or consider dividing the material into two lessons.

Vocabulary list

| Word | Part of speech | Word forms | Definition | Example sentence |
| --- | --- | --- | --- | --- |
| Bias | Noun | Biased (adj), Bias (verb) | A prejudice in favor of or against one thing or person. | Her bias toward visual learning made her ignore the new data. |
| Pattern | Noun | Patterned (adj), Pattern (verb) | A repeated decorative design or a regular way something happens. | Our brains are very good at finding a pattern in random data. |
| Intuition | Noun | Intuitive (adj), Intuitively (adv) | The ability to understand something immediately without conscious reasoning. | Don’t trust your intuition alone when looking at complex statistics. |
| Heuristic | Noun | Heuristic (adj) | A mental shortcut that allows people to solve problems quickly. | Using a heuristic helps us make split-second safety decisions. |
| Communal | Adjective | Community (noun), Communally (adv) | Shared by all members of a community; for common use. | Science is a communal process involving many researchers. |
| Evidence | Noun | Evident (adj), Evidentiary (adj) | The available body of facts or information indicating whether a belief is true. | Scientific thinking requires hard evidence rather than gut feelings. |
| Random | Adjective | Randomize (verb), Randomly (adv) | Made, done, or happening without method or conscious decision. | Participants were chosen at random for the medical trial. |
| Placebo | Noun | Placebo effect (noun) | A harmless pill or procedure prescribed more for its psychological benefit than for any physiological effect. | The control group received a placebo instead of the actual drug. |
| Durable | Adjective | Durability (noun), Durably (adv) | Able to withstand wear, pressure, or damage; hard-wearing. | Science builds durable knowledge that stands the test of time. |
| Interrogate | Verb | Interrogation (noun), Interrogator (noun) | To ask questions of someone or something closely, aggressively, or formally. | We must interrogate our universe to figure out how it works. |

🧠 Vocabulary: Types of cognitive bias

| Word | Part of speech | Definition | Example sentence |
| --- | --- | --- | --- |
| Confirmation bias | Noun | The tendency to search for, favor, and recall information that confirms one’s pre-existing beliefs. | Because of confirmation bias, he only read news articles that agreed with his political views. |
| Availability bias | Noun | A mental shortcut that relies on immediate examples that come to a given person’s mind when evaluating a specific topic. | The availability bias makes people fear plane crashes more than car accidents because plane crashes are more vivid in the news. |
| Anchoring bias | Noun | The tendency to rely too heavily on the first piece of information offered (the “anchor”) when making decisions. | The initial high price of the car acted as an anchor, making the slightly lower sale price seem like a bargain: a classic case of anchoring bias. |
| Hindsight bias | Noun | The inclination, after an event has occurred, to see the event as having been predictable, despite there having been little or no objective basis for predicting it. | After the stock market crashed, many claimed they saw it coming, a classic case of hindsight bias. |
| Dunning-Kruger effect | Noun | A cognitive bias in which people with limited competence in a domain overestimate their abilities. | In the early stages of learning a language, the Dunning-Kruger effect can make students feel more fluent than they actually are. |
| Sunk cost fallacy | Noun | The phenomenon where a person is reluctant to abandon a strategy or course of action because they have invested heavily in it. | She stayed in the boring movie because she had already paid for the ticket, falling for the sunk cost fallacy. |

Vocabulary for extension

- Cognitive (adj): Relating to the mental action or process of acquiring knowledge (Noun: Cognition).

- Implicit (adj): Suggested though not directly expressed; unconscious (Adverb: Implicitly).

- Explicit (adj): Stated clearly and in detail, leaving no room for confusion (Adverb: Explicitly).

- Algorithm (noun): A process or set of rules to be followed in calculations or problem-solving (Adjective: Algorithmic).

- Skew (verb): To suddenly change direction or to bias information (Noun: Skew).

- Prevalent (adj): Widespread in a particular area or at a particular time (Noun: Prevalence).

- Contradict (verb): To deny the truth of a statement by asserting the opposite (Noun: Contradiction).

- Vet (verb): To make a careful and critical examination of something (Noun: Vetting).

- Diversity (noun): The state of being diverse; a range of different things (Adjective: Diverse).

- Flexibility (noun): The quality of bending easily or the ability to be easily modified (Adjective: Flexible).

Teacher tip: Keeping these terms visible helps reinforce learning. Cognitive bias posters in your classroom or office can serve as a daily ‘mental speed bump’ for your students.

Teaching tips

- Word mapping: Have students create “word families” by grouping the nouns, verbs, and adjectives together to see how the roots change.

- Context clues: Read sentences from the transcript and ask students to guess the meaning of the bolded words based on the surrounding text.

Grammar: Hypotheticals and ongoing discovery

The second conditional: Use this to “interrogate” the present or challenge your current intuition by imagining a situation that is not real.

- Structure: If + Past Simple, … would + verb

- Context: Use this for counterfactual thinking about the present. “If my brain didn’t rely on heuristics, I would spend hours deciding what to eat for breakfast.”

The present perfect: Use this to describe the “durable” nature of science that continues today.

- Structure: Have/Has + Past Participle

- Context: “Researchers have discovered that learning styles are a myth, yet the belief persists.”

Advanced Tip: For C1 students, introduce the Third Conditional to discuss historical scientific errors.

- Structure: If + Past Perfect, … would have + Past Participle

- Context: “If they had used a double-blind study in the 1800s, they would have realized the treatment was a placebo.”

Useful phrases

Key phrases

- Fall prey to: To be vulnerable to or overcome by something (e.g., “Our brains fall prey to cognitive biases.”)

- Wait for it: A phrase used to create suspense before a reveal.

- The kicker is: Used to introduce a surprising or disappointing fact.

- Give it up for: A way to ask an audience to applaud or show appreciation.

- By that same token: In the same way; for the same reason.

Teaching tips

- Roleplay: Encourage students to use “The kicker is…” when telling a short story about a surprising discovery they made.

- Phrase matching: Create a worksheet where students match these phrases to their meanings.

Example conversations

Conversation 1: Basic description

Student A: Did you know that our brains use shortcuts called heuristics?

Student B: I didn’t know that. Why do we have them?

Student A: They help us solve simple problems quickly, so we don’t waste brainpower.

Student B: That sounds useful, but can they also lead to mistakes?

Conversation 2: Adding details

Student A: I’ve been reading about availability bias lately.

Student B: Is that why people are afraid of flying even though driving is more dangerous?

Student A: Exactly. Plane crashes are all over the news, so that information is more available.

Student B: It’s wild how the news and algorithms can skew our perception of risk.

Conversation 3: More advanced

Student A: I used to think I was a visual learner, but apparently, there’s no scientific evidence for learning styles.

Student B: Really? Why do so many teachers still talk about them then?

Student A: It’s likely due to confirmation bias; people ignore evidence that contradicts their existing beliefs.

Student B: That shows why we need communal vetting and double-blind studies to find the truth.

Teaching tips

- Substitution drill: Have students replace the specific bias mentioned in Conversation 2 with others like “anchoring bias” or “hindsight bias.”

- Shadow reading: Play the audio/video of the transcript and have students read along with the speakers to catch the natural rhythm.

Teaching strategy

Use the Inquiry-Based Learning approach. Instead of telling students what a bias is, present them with a “riddle” or a scenario (like the airplane vs. car safety) and ask them to explain why their “gut feeling” might be wrong. This mirrors the scientific process of interrogation.

Discussion questions for advanced students

- Which of these biases do you think is the most dangerous in the age of social media?

- How does communal vetting (from our previous list) help protect a scientific study from confirmation bias?

- Can you think of a time your anchoring bias affected a purchase you made?

60-minute lesson plan schedule

Step 1: The “Gut Feeling” Warm-up (5 minutes) Ask students: “Have you ever felt a ‘vibe’ about someone that turned out to be completely wrong?” Use this to define intuition vs. evidence.

Step 2: The Inquiry Riddle (10 minutes) Present a scenario: “Why do more people fear sharks (8 deaths/year) than cows (20 deaths/year)?” Let students brainstorm before introducing the term Availability Bias.

Step 3: Vocabulary & Cognitive Glitches (15 minutes) Review the primary and extension lists. Focus specifically on the difference between Implicit (unconscious) and Explicit (stated) bias.

Step 4: The Bias Hunter Activity (15 minutes) Use the “Bias Sorting Task” scenarios. In pairs, students must not only name the bias but explain it using the Second Conditional (e.g., “If the manager were being objective, he wouldn’t hire based on a university tie.”)

Step 5: Conversation & Roleplay (10 minutes) Perform Conversation 3 (“More advanced”). Have students swap out the biases and practice the useful phrases, such as “The kicker is…”

Step 6: Personalization & Wrap-up (5 minutes) Each student identifies one “mental speed bump” they can use this week to stop a bias in its tracks.

Additional tips

- Cultural sensitivity: Acknowledge that “intuition” is highly valued in some cultures, so frame science as a complementary tool rather than a replacement for cultural wisdom.

- Visual aids: Use charts showing the actual statistics of car vs. plane accidents to illustrate availability bias.

- Adapt for level: For lower levels, focus on “patterns” and “shortcuts.” For higher levels, dive into “randomized controlled trials.”

- Technology: Use an online quiz tool (like Kahoot) to test students on the different types of biases.

Common mistakes to address

- Grammar: Using “evidence” as a countable noun (e.g., “an evidence” is incorrect; use “a piece of evidence”).

- Word choice: Confusing “random” (unplanned) with “arbitrary” (based on personal whim).

Example activity

Teaching Activity: The “Bias” Sorting Task

To help students internalize these, provide them with the following scenarios and ask them to “diagnose” the bias:

- Scenario A: A manager hires a candidate because they went to the same university, ignoring the candidate’s lack of experience.

- (Answer: Affinity Bias/Confirmation Bias)

- Scenario B: A person refuses to get a vaccine because they once heard a single story about a side effect, ignoring millions of data points showing safety.

- (Answer: Availability Bias)

- Scenario C: A student spends 10 hours on a failing project and refuses to start over because they don’t want those 10 hours to “go to waste.”

- (Answer: Sunk Cost Fallacy)

To make this interactive, I use the Critical Thinking Cards Deck during the substitution drills. It turns identifying biases into a competitive game that students love.

Homework or follow-up

- Writing: Write a 200-word paragraph about a “story that made sense” to you in the past but turned out to be wrong.

- Speaking: Record a 1-minute voice note explaining the “placebo effect” to a friend.

- Research: Find one example of a famous “double-blind study” and be prepared to explain it in the next class.


Conclusion

Understanding how our minds work is the first step toward clearer, more scientific thinking. By recognizing the shortcuts our brains take, we can become better at analyzing the news, our social feeds, and even our own beliefs.

What cognitive bias do you think is the hardest to overcome? Let us know in the comments below and share this article with your fellow science enthusiasts!
